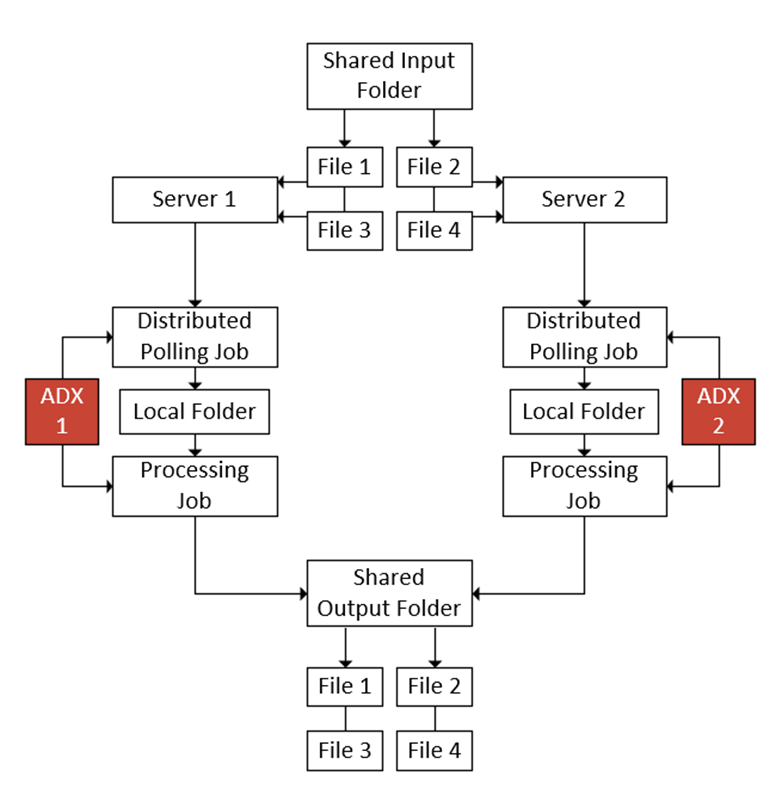

In Autobahn, it is possible to have multiple copies sharing a workload. This can be useful for sudden, extremely high workloads where you might want to combine the processing power of multiple servers, to give users faster turnaround on input and output, or as a safeguard in case one of the servers goes down.

You will find a video at the bottom of this article going into more detail on the setup.

It works by each server pulling a pre-defined number of documents from a shared location and feeding them to the job you wish to use to process them.
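To make the idea concrete, here is a minimal Python sketch of what a single polling pass does conceptually. It is not how Autobahn DX implements the step internally. The function name `poll_once` and its arguments are hypothetical and chosen only to mirror the idea of a shared folder, a per-server folder, a limit and a list of extensions.

```python
import shutil
from pathlib import Path


def poll_once(shared_input, local_folder, limit=1, extensions=("pdf",)):
    """Move up to `limit` matching files from shared_input into local_folder.

    Hypothetical illustration only: it mimics the idea of a polling pass,
    not Autobahn DX's internal behaviour.
    """
    shared = Path(shared_input)
    local = Path(local_folder)
    local.mkdir(parents=True, exist_ok=True)

    # Normalise "pdf", ".pdf" and "PDF" to the same suffix form.
    wanted = {"." + ext.lower().lstrip(".") for ext in extensions}

    claimed = []
    for candidate in sorted(shared.iterdir()):
        if len(claimed) >= limit:
            break
        if not candidate.is_file() or candidate.suffix.lower() not in wanted:
            continue
        target = local / candidate.name
        try:
            shutil.move(str(candidate), str(target))
        except (FileNotFoundError, PermissionError):
            # Another server took or locked this file first; try the next one.
            continue
        claimed.append(target)
    return claimed
```

Because every server takes only a small batch on each pass, whichever server is free next picks up the next documents, which is what spreads the load.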

Setting this up is very straightforward. You can do this with as many servers as you have licenses for, but for simplicity's sake, I will only be doing it with 2 for this article.

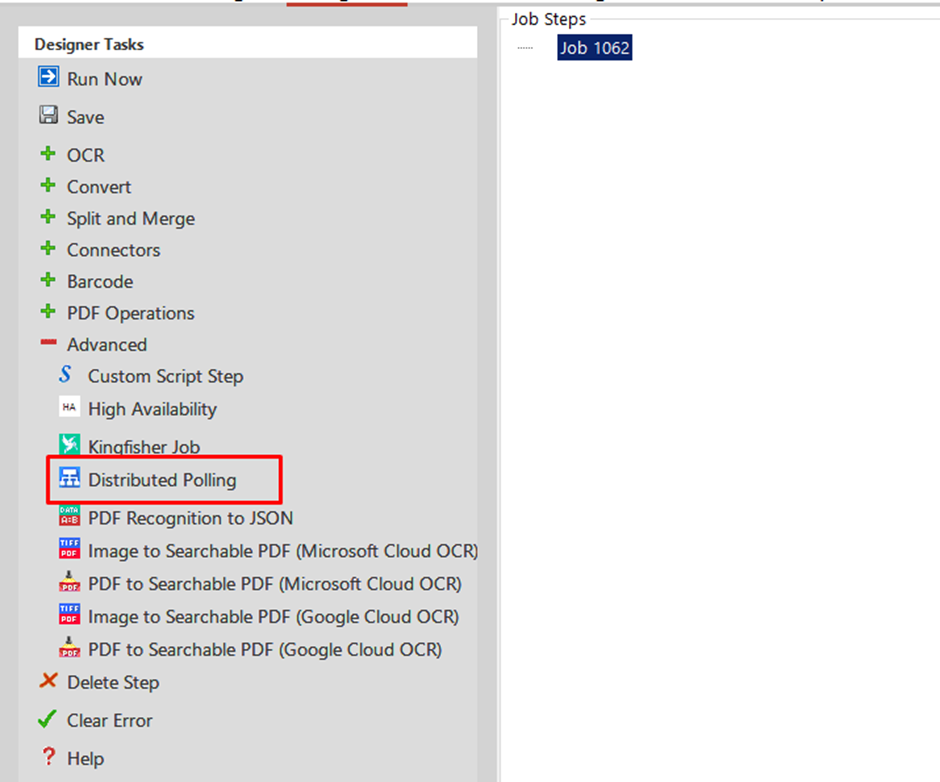

You want to start by making a job on all your Autobahn DX instances and adding the “Distributed Polling” job step from the “Advanced” tab.

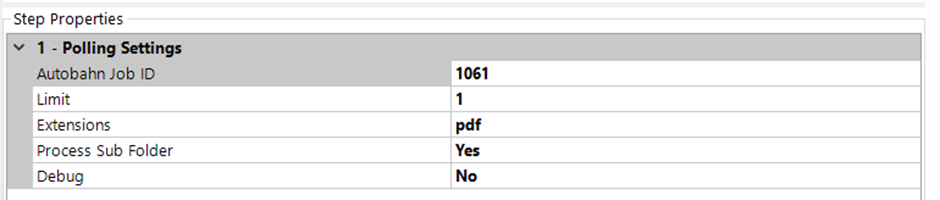

Once the job step has been added, you will see there are 5 properties. We will leave the first property, “Autobahn Job ID”, blank for now and fill it in later with the Job ID of the job that will perform the actual processing.

The “Limit” property is how many documents you want each instance to pull at a time. Again, for demonstration purposes, I will be setting it at 1.

“Extensions” is what file types you wish to include. I will be using “PDF” only for this, but you can add any file format.

The rest of the properties are self-explanatory.

The “Source Folder” and “Destination Folder” need to be set up as follows:

Source Folder: Shared Input Location (Folder that all copies of Autobahn will pull from)

Destination Folder: Local folder unique to this job

Once you have set these up, it is time to make the processing job. This job can be whatever you need. In this case, I will just be using an OCR step.

The “Source Folder” for this job will be the previously set unique folder and the “Destination Folder” will be the Shared Output Location (Folder that all copies of Autobahn will deposit to).
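The folder wiring is the part that is easiest to get wrong, so here is a small Python sketch that writes the layout down as data and checks it. Everything in it is an assumption made for illustration: the class, the function, the server names and the paths are all hypothetical, and nothing here reads from or talks to Autobahn DX.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceFolders:
    """Folder settings for one server (hypothetical names and paths)."""
    name: str
    polling_source: str          # shared input location
    polling_destination: str     # local folder for this server
    processing_source: str       # should equal polling_destination
    processing_destination: str  # shared output location


def check_wiring(instances):
    """Return a list of human-readable problems; empty means consistent."""
    problems = []
    if len({i.polling_source for i in instances}) > 1:
        problems.append("polling jobs should all read from the same shared input folder")
    if len({i.processing_destination for i in instances}) > 1:
        problems.append("processing jobs should all write to the same shared output folder")
    for i in instances:
        if i.processing_source != i.polling_destination:
            problems.append(
                f"{i.name}: processing job should read from the polling job's destination"
            )
    return problems


# Example layout for two servers; the paths are made up.
servers = [
    InstanceFolders("server-1", r"\\share\input", r"D:\autobahn\local",
                    r"D:\autobahn\local", r"\\share\output"),
    InstanceFolders("server-2", r"\\share\input", r"D:\autobahn\local",
                    r"D:\autobahn\local", r"\\share\output"),
]
print(check_wiring(servers))  # prints [] because the wiring is consistent
```

Both servers can use the same local path here because each path lives on a different machine. Only the shared input and shared output locations need to be reachable by every server.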

Once the processing job has been made, take a note of its Job ID and add that into the “Autobahn Job ID” property in the Distributed Polling job step you made previously.

Once that has been done, ensure that all jobs are set to a schedule and then you should be done!

Some things to note:

- The Polling Job and the Processing Job should have the same schedule settings

- The balance between the Polling job's “Limit” property and the schedule timings will likely require a bit of trial and error to get right
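As a starting point for that trial and error, a rough estimate can help. The Python sketch below assumes that the schedule fires at a fixed interval, that each run pulls at most “Limit” documents per server, and that processing finishes before the next run starts. None of those assumptions are guaranteed by Autobahn DX, and the function names are hypothetical, so treat the results as an upper bound rather than a prediction.

```python
import math


def max_docs_per_hour(instances, limit, interval_minutes):
    """Upper bound on documents pulled per hour across all servers."""
    runs_per_hour = 60 / interval_minutes
    return instances * limit * runs_per_hour


def runs_to_clear(backlog, instances, limit):
    """Number of scheduled runs needed to drain a backlog of documents."""
    return math.ceil(backlog / (instances * limit))


# Two servers, Limit of 1, schedule every 5 minutes.
print(max_docs_per_hour(instances=2, limit=1, interval_minutes=5))  # 24.0
# A backlog of 100 documents with the same two servers and Limit of 1.
print(runs_to_clear(backlog=100, instances=2, limit=1))  # 50
```

If the estimate is far below the volume you expect, raise the limit or shorten the interval. If runs start to overlap because processing takes longer than the interval, go the other way.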